Researchers at the U.S. Army’s corporate research laboratory developed an artificial intelligence architecture that can learn and understand complex events, enhancing the trust and coordination between human and machine needed to successfully complete battlefield missions.

The overall effort, conducted in collaboration with the University of California, Los Angeles and Cardiff University and funded by the laboratory’s Distributed Analytics and Information Science International Technology Alliance, uses neuro-symbolic artificial intelligence to address the challenge of sharing relevant knowledge about complex events between coalition partners.

Complex events are compositions of primitive activities connected by known spatial and temporal relationships, said Dr. Lance Kaplan, a researcher at the U.S. Army Combat Capabilities Development Command, now referred to as DEVCOM, Army Research Laboratory. For such events, he said, the training data available for machine learning is typically sparse.

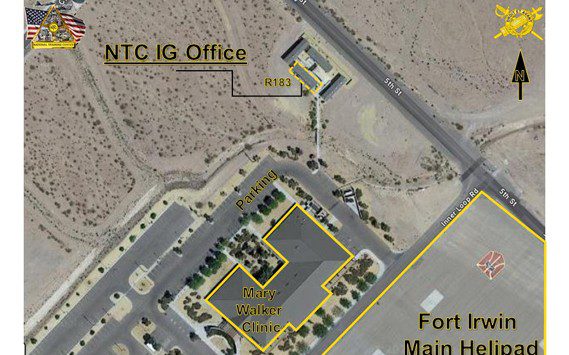

To further understand complex events, imagine people in a crowd taking pictures of an iconic government building. The act of picture taking involves primitive events/actions. Now, imagine that some of the people are coordinating their picture taking for the purpose of a reconnaissance mission. A certain sequence of primitive events such as picture taking occurs. Clearly, it would be good for a force protection system to detect and identify these complex events without generating too many false alarms due to random primitive events acting as clutter, Kaplan said.
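The picture-taking scenario can be made concrete with a small sketch. The Python below is only an illustration of the idea of a complex event as an ordered sequence of primitive events inside a time window, with unrelated events treated as clutter; it is not the researchers' system. The event labels, the `PrimitiveEvent` type, the `detect_sequence` function and the 60-second window are all hypothetical names and values chosen for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PrimitiveEvent:
    time: float   # seconds since the start of observation
    actor: str    # hypothetical identifier of the person observed
    action: str   # primitive activity label (hypothetical labels below)


def detect_sequence(events, pattern, window):
    """Find occurrences of `pattern` (ordered action labels) within `window` seconds.

    Events that do not advance the pattern are treated as clutter and skipped.
    """
    events = sorted(events, key=lambda e: e.time)
    matches = []
    for i, first in enumerate(events):
        if first.action != pattern[0]:
            continue
        matched = [first]
        for e in events[i + 1:]:
            if e.time - first.time > window:
                break  # too late to belong to this candidate sequence
            if len(matched) < len(pattern) and e.action == pattern[len(matched)]:
                matched.append(e)
        if len(matched) == len(pattern):
            matches.append(matched)
    return matches


# Hypothetical rule: gate, then fence, then entrance photographed in order.
PATTERN = ("photo_gate", "photo_fence", "photo_entrance")

EVENTS = [
    PrimitiveEvent(0.0, "a", "photo_statue"),    # clutter: a tourist photo
    PrimitiveEvent(5.0, "b", "photo_gate"),
    PrimitiveEvent(12.0, "c", "walk"),           # clutter
    PrimitiveEvent(20.0, "d", "photo_fence"),
    PrimitiveEvent(31.0, "b", "photo_entrance"),
    PrimitiveEvent(300.0, "e", "photo_gate"),    # isolated photo, no follow-up
]

matches = detect_sequence(EVENTS, PATTERN, window=60.0)
```

In this sketch the primitive labels are given. In a real system they would come from noisy perception models, which is the part the neural layers address.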

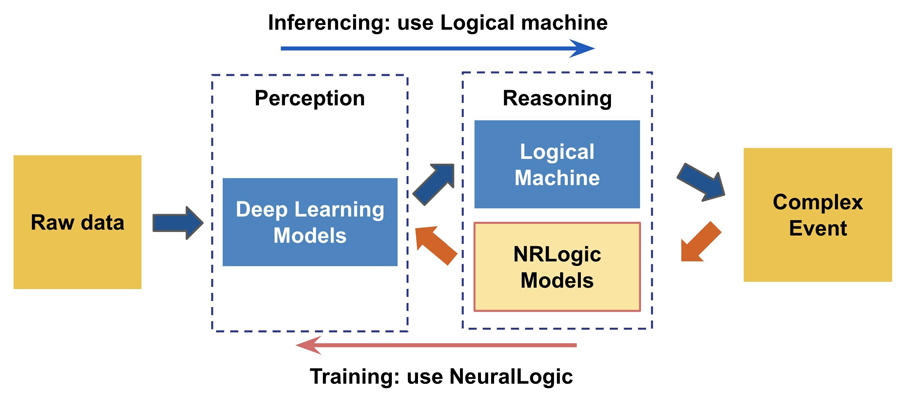

This new neuro-symbolic architecture enables the injection of human knowledge through symbolic rules (i.e., tellability), while leveraging the power of deep learning to discriminate between the different primitive activities.

In this architecture, the lower layer is composed of neural networks that are connected through a logical layer to form the complex event classification decision, Kaplan said. The symbolic layer incorporates known rules that make it possible to train the lower layers without labeled training data for the primitive activities.

Two different approaches have been developed to enable learning at the neural layers by propagating gradients through the logic layer.

The first, Neuroplex, uses a neural surrogate for the symbolic layer. The second, DeepProbCEP, uses DeepProbLog to propagate the gradients.
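To illustrate what propagating gradients through a logic layer means, here is a deliberately tiny Python sketch. It is neither Neuroplex nor DeepProbCEP. It assumes two one-parameter "detectors" for hypothetical primitives A and B, the single rule "complex event = A AND B", and a logic layer that scores the rule as the product of the two probabilities (an independence assumption). Only the complex event is labeled, and the chain rule is written out by hand rather than taken from any library.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# Each example: two 1-D sensor readings and ONE label for the complex event.
# Hypothetical encoding: +1.0 means the primitive is present, -1.0 absent.
# No labels for the primitives A and B are ever given to the learner.
DATA = [((+1.0, +1.0), 1), ((+1.0, -1.0), 0), ((-1.0, +1.0), 0), ((-1.0, -1.0), 0)]


def train(data, steps=2000, lr=0.5):
    # One tiny detector per primitive: p = sigmoid(w * x + b), stored as [w, b].
    params = {"A": [0.0, 0.0], "B": [0.0, 0.0]}
    for _ in range(steps):
        grads = {"A": [0.0, 0.0], "B": [0.0, 0.0]}
        for (x_a, x_b), y in data:
            p_a = sigmoid(params["A"][0] * x_a + params["A"][1])
            p_b = sigmoid(params["B"][0] * x_b + params["B"][1])
            p_c = p_a * p_b  # logic layer: P(A AND B), independence assumed
            # Derivative of the cross-entropy loss with respect to p_c.
            dl_dpc = -y / p_c + (1 - y) / (1.0 - p_c)
            # Chain rule through the logic layer, then through each sigmoid.
            dl_dza = dl_dpc * p_b * p_a * (1.0 - p_a)
            dl_dzb = dl_dpc * p_a * p_b * (1.0 - p_b)
            grads["A"][0] += dl_dza * x_a
            grads["A"][1] += dl_dza
            grads["B"][0] += dl_dzb * x_b
            grads["B"][1] += dl_dzb
        for name in params:
            for k in range(2):
                params[name][k] -= lr * grads[name][k] / len(data)
    return params


def detect(params, name, x):
    """Probability that primitive `name` is present given reading `x`."""
    w, b = params[name]
    return sigmoid(w * x + b)


params = train(DATA)
```

After training, `detect(params, "A", 1.0)` is close to 1 and `detect(params, "A", -1.0)` is close to 0, even though the learner only ever saw labels for the complex event. The rule supplies the missing supervision.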

Neuroplex was evaluated against pure deep learning methods over three types of complex events formed by a sequence of images, a sequence of sound clips and a nursing activity data set collected from motion capture, meditag and accelerometer sensors.

The experiments and evaluation showed that Neuroplex can learn to detect, efficiently and effectively, complex events that state-of-the-art neural network models cannot handle.

During training, Neuroplex not only reduced data annotation requirements by a factor of 100, but also sped up the learning process for complex event detection by a factor of four.

Similarly, in experiments on urban sound clips, DeepProbCEP more than doubled complex event accuracy compared with a two-stage neural network architecture.

“This research demonstrates the potential for neuro-symbolic artificial intelligence architecture to learn how to distinguish complex events with limited training samples,” Kaplan said. “Furthermore, it is demonstrated that the systems can learn primitive activities without the need for annotations of the simple activities.”

In practice, he said, the initial layers of the neuro-symbolic architecture are pre-trained, but the amount of data that must be collected and labeled can be greatly reduced, lowering costs.

In addition, the neuro-symbolic architecture can leverage the symbolic rules to update the neural layers using a small set of labeled complex activities in situations where the raw data distribution has changed. For example, it may be raining in the field when the neural network models were trained on data collected in sunny weather.

Neuro-symbolic learning is also able to combine the superior pattern recognition capabilities of deep learning with high-level symbolic reasoning.

“This means the AI system can naturally provide explanations of its recommendations in a human understandable form,” Kaplan said. “Ultimately, this will enable better trust and coordination with the AI agent and human decision makers.”

The symbolic layer also enables tellability. In other words, he said, the decision maker can define and update the rules that connect primitive activities to complex events. This applies both at the initialization stage, where the perception module is untrained, and during the fine-tuning stage, where the perception module is a pre-trained, off-the-shelf model that needs to be fine-tuned to a specific environment.
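A minimal Python sketch of tellability, under stated assumptions: the rule book is plain data that a person can edit, and a complex event is scored as the product of its primitives' probabilities (independence assumed). The event names, the probabilities and the `complex_event_scores` function are hypothetical and are not taken from the research.

```python
from math import prod

# Hypothetical rule book: complex event -> primitives that must all occur.
rules = {"coordinated_reconnaissance": ["photo_gate", "photo_fence"]}


def complex_event_scores(primitive_probs, rules):
    """Score each complex event as the product of its primitives' probabilities."""
    return {
        name: prod(primitive_probs[p] for p in prims)
        for name, prims in rules.items()
    }


# Hypothetical output of the perception (neural) layer for one observation.
perception_output = {"photo_gate": 0.9, "photo_fence": 0.8, "photo_entrance": 0.5}

before = complex_event_scores(perception_output, rules)

# The decision maker tightens the rule; the perception layer is left untouched.
rules["coordinated_reconnaissance"].append("photo_entrance")
after = complex_event_scores(perception_output, rules)
```

Here the score for the complex event drops from about 0.72 to about 0.36 once the stricter rule is in place, without retraining anything in the perception layer.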

“This research is able to leverage the advancements in deep learning and symbolic reasoning,” Kaplan said. “The dimensionality of the sensor data whether it is time-series data (e.g., accelerometers) or video is infeasible for pure symbolic reasoning. Similarly, deep learning is unable to learn patterns that manifest over large time and spatial scales inherent in complex events. The hybrid of neuro-symbolic learning is necessary for complex event processing.”

The research directly supports the Army Priority Research Area of artificial intelligence by increasing the speed and agility with which the Army responds to emerging threats, Kaplan said. Specifically, the research is focused on AI for detecting and classifying complex events using limited training data to adapt to changing environments and learn emerging events.

The work also supports network command, control, communications and intelligence by engendering trust between AI and human agents representing different coalition partners, he said. The AI incorporates symbolic explanations, and the human agents use symbolic rules to guide the reasoning of the AI.

“Complex event processing is difficult, and the research is still in its infancy,” Kaplan said. “Nevertheless, we have made great advancements over the last two years to develop the neuro-symbolic framework. The research still needs to consider more relevant data sets, and there are still other questions to answer regarding the human-machine interface. In the long term, I am confident that a neuro-symbolic AI will be available to Soldiers to provide situational awareness superior to that available to the adversary.”

This research was recently presented at the virtual International Conference on Logic Programming, and will be featured during the upcoming ACM Conference on Embedded Networked Sensor Systems, or SenSys 2020, scheduled virtually for Nov. 16-19.