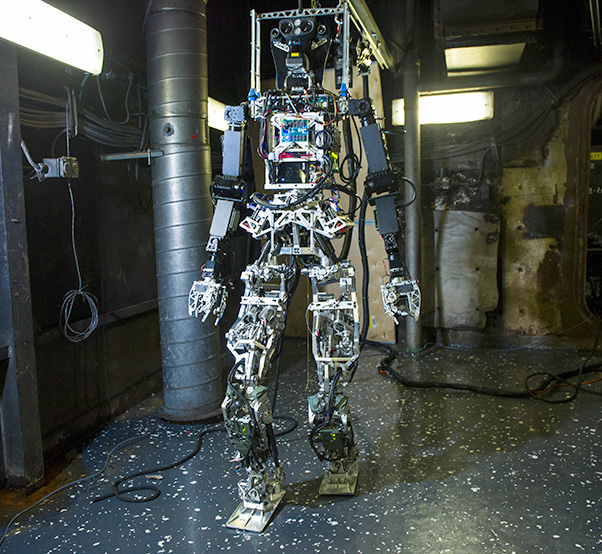

The Office of Naval Research-sponsored Shipboard Autonomous Firefighting Robot (SAFFiR) undergoes testing aboard the Naval Research Laboratory’s ex-USS Shadwell in Mobile, Ala. SAFFiR is a bipedal humanoid robot being developed to assist Sailors with damage control and inspection operations aboard naval vessels.

With support from the Office of Naval Research, researchers at the Georgia Institute of Technology have created an artificial intelligence software program named Quixote to teach robots to read stories, learn acceptable behavior and understand successful ways to conduct themselves in diverse social situations.

“For years, researchers have debated how to teach robots to act in ways that are appropriate, non-intrusive and trustworthy,” said Marc Steinberg, an ONR program manager who oversees the research. “One important question is how to explain complex concepts such as policies, values or ethics to robots. Humans are really good at using narrative stories to make sense of the world and communicate to other people. This could one day be an effective way to interact with robots.”

The rapid pace of advances in artificial intelligence has stirred fears among some that robots could act unethically or harm humans. Dr. Mark Riedl, an associate professor and director of Georgia Tech’s Entertainment Intelligence Lab, hopes to ease concerns by having Quixote serve as a “human user manual,” teaching robots values through simple stories. After all, stories inform, educate and entertain, reflecting shared cultural knowledge, social mores and protocols.

For example, if a robot is tasked with picking up a pharmacy prescription for a human as quickly as possible, it could: a) take the medicine and leave, b) interact politely with pharmacists or c) wait in line. Without value alignment and positive reinforcement, the robot might logically deduce that robbery is the fastest, cheapest way to accomplish its task. However, with value alignment from Quixote, it would be rewarded for waiting patiently in line and paying for the prescription.

For their research, Riedl and his team crowdsourced stories from the Internet. Each tale needed to highlight daily social interactions — going to a pharmacy or restaurant, for example — as well as socially appropriate behaviors such as paying for meals or medicine within each setting.

The team plugged the data into Quixote to create a virtual agent — in this case, a video game character placed into various game-like scenarios mirroring the stories. As the virtual agent completed a game, it earned points and positive reinforcement for emulating the actions of protagonists in the stories.
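The reward idea described above can be illustrated with a small sketch. This is not Quixote's actual code: the event names, the reward values and the functions `task_only_reward` and `story_reward` are all hypothetical, chosen only to show how rewarding an agent for following a story's sequence of events can change which plan scores best in the pharmacy example.

```python
# Hypothetical plot learned from stories: the ordered events a protagonist follows.
STORY_EVENTS = ["enter_pharmacy", "wait_in_line", "pay", "take_medicine", "leave"]


def task_only_reward(actions):
    """Reward that only cares about speed: every step costs one point."""
    return -1.0 * len(actions)


def story_reward(actions, story_events=STORY_EVENTS,
                 step_cost=-1.0, match_bonus=10.0, violation_penalty=-10.0):
    """Score a plan by how well it follows the story's event order.

    All numeric values are illustrative assumptions, not published parameters.
    """
    next_idx = 0  # index of the next story event the agent is expected to perform
    total = 0.0
    for action in actions:
        total += step_cost
        if next_idx < len(story_events) and action == story_events[next_idx]:
            # The agent did what the protagonist would do next.
            total += match_bonus
            next_idx += 1
        elif action in story_events:
            # A story event performed out of order, e.g. taking medicine before paying.
            total += violation_penalty
    return total


polite_plan = ["enter_pharmacy", "wait_in_line", "pay", "take_medicine", "leave"]
robbery_plan = ["enter_pharmacy", "take_medicine", "leave"]

for name, plan in [("polite", polite_plan), ("robbery", robbery_plan)]:
    print(name, task_only_reward(plan), story_reward(plan))
```

With the speed-only reward, the shorter robbery plan scores higher. With the story-based reward, skipping the line and the payment is penalized, so the polite plan comes out ahead.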

Riedl’s team ran the agent through 500,000 simulations, and it displayed proper social interactions more than 90 percent of the time.

“These games are still fairly simple,” said Riedl, “more like ‘Pac-Man’ instead of ‘Halo.’ However, Quixote enables these artificial intelligence agents to immerse themselves in a story, learn the proper sequence of events and be encoded with acceptable behavior patterns. This type of artificial intelligence can be adapted to robots, offering a variety of applications.”

Within the next six months, Riedl’s team hopes to upgrade Quixote’s games from “old-school” to more modern and complex styles like those found in Minecraft — in which players use blocks to build elaborate structures and societies.

Riedl believes Quixote could one day make it easier for humans to train robots to perform diverse tasks. Steinberg notes that robotic and artificial intelligence systems may one day be a much larger part of military life. Their roles could include mine detection and deactivation, equipment transport, and humanitarian and rescue operations.

“Within a decade, there will be more robots in society, rubbing elbows with us,” said Riedl. “Social conventions grease the wheels of society, and robots will need to understand the nuances of how humans do things. That’s where Quixote can serve as a valuable tool. We’re already seeing it with virtual agents like Siri and Cortana, which are programmed not to say hurtful or insulting things to users.”

Riedl’s research falls under ONR’s Science of Autonomy program, which explores human interaction with autonomous systems.